| result | count | explanation |
|---|---|---|
| ACCEPT | 23,001 | The server accepted dummy@spf-all.com. |
| SMTPNOCONN | 791 | No good connection to port 25 could be established. |
| NXDOMAIN | 287 | DNS lookup of domain failed. |
| R-ON-FAIL | 221 | The server rejected dummy@spf-all.com but accepted a non SPF-tainted sender after RSET. |
| NONE | 156 | The server rejected dummy@spf-all.com and didn't accept RSET. Possibly, the server dropped the connection right away. |
| SERVFAIL | 153 | DNS lookup of domain failed. |
| SMTPERROR | 151 | A connection was established, but the SMTP greeting code was not 220. |
| OTHER | 54 | The server neither accepted nor rejected dummy@spf-all.com. This includes some kind of greylisting. |
| REJECT | 31 | The server rejected both dummy@spf-all.com as well as the non SPF-tainted sender after RSET. |
| REJECTHELO | 8 | The server did not accept the HELO command. 5 servers mentioned greylisting explicitly. |
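The result labels above can be read as a small decision procedure over the SMTP reply codes seen during a probe. The following is only a sketch of that reading, not the survey's actual code: the function name `classify_probe`, its arguments, and the treatment of borderline cases (a 4xx reply counted as OTHER, a temporary failure for the second sender counted as REJECT) are assumptions made for illustration.

```python
def _ok(code):
    """True for a positive-completion (2xx) SMTP reply code."""
    return code is not None and 200 <= code < 300


def classify_probe(greeting, helo, tainted, rset=None, clean=None):
    """Map SMTP reply codes to the result labels of the table above.

    Every argument is a three-digit reply code, or None if the server
    gave no reply at that step (for example, it dropped the connection).
    `tainted` is the reply to the command carrying dummy@spf-all.com,
    `clean` the reply for the non SPF-tainted sender tried after RSET.
    """
    if greeting != 220:
        return "SMTPERROR"
    if not _ok(helo):
        return "REJECTHELO"
    if _ok(tainted):
        return "ACCEPT"
    if tainted is not None and 400 <= tainted < 500:
        # Assumption: temporary failures (e.g. greylisting) count as
        # "neither accepted nor rejected".
        return "OTHER"
    # From here on the SPF-failing sender was rejected or the link dropped.
    if not _ok(rset):
        return "NONE"
    if _ok(clean):
        return "R-ON-FAIL"
    # Assumption: any non-2xx reply for the clean sender counts as REJECT.
    return "REJECT"


print(classify_probe(220, 250, 550, rset=250, clean=250))
```

The DNS and connection failures (NXDOMAIN, SERVFAIL, SMTPNOCONN) are left out on purpose, since they occur before any SMTP reply is available.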

The exiguous number of domains deploying SPF's reject-on-fail feature seems to be at odds with the almost 50% awareness implied by the presence of some type of SPF record in the reachable domains. (The following table roughly agrees with the results of the IETF's SPF survey.)

| spf_type | count | explanation |
|---|---|---|
| NONE | 12,048 | Domains that publish no usable SPF policy, including 572 permerror/temperror cases. |
| TXT | 10,915 | Domains that publish SPF policies using the TXT RRTYPE. |
| NULL | 1,231 | All and only those domains with connection problems (result=NXDOMAIN, SERVFAIL, or SMTPNOCONN). |
| BOTH | 587 | Domains that publish a policy using both TXT and SPF RRTYPEs consistently. |
| SPF | 43 | Domains that publish SPF policies using the SPF RRTYPE only. |
| VARY | 20 | Domains that publish a different policy for each RRTYPE. |
| BAD | 9 | Domains that caused an exception during the evaluation of their TXT policy. |
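The "almost 50%" awareness figure and the small reject-on-fail share can be recomputed from the two tables above. This sketch rests on two assumptions about how the figures were derived: "reachable" means every domain except those with spf_type NULL, and "some type of SPF record" covers TXT, BOTH, SPF, VARY, and BAD.

```python
# Counts copied from the spf_type table above.
spf_type = {
    "NONE": 12048, "TXT": 10915, "NULL": 1231,
    "BOTH": 587, "SPF": 43, "VARY": 20, "BAD": 9,
}
R_ON_FAIL = 221  # from the result table

# Assumption: reachable = all domains minus those with connection problems.
reachable = sum(spf_type.values()) - spf_type["NULL"]

# Assumption: these types count as "some type of SPF record".
with_record = sum(spf_type[k] for k in ("TXT", "BOTH", "SPF", "VARY", "BAD"))

awareness = with_record / reachable
reject_share = R_ON_FAIL / reachable

print(f"reachable domains:      {reachable}")
print(f"publishing some record: {awareness:.1%}")
print(f"rejecting on SPF fail:  {reject_share:.2%}")
```

Under these assumptions the awareness share comes out just under 49%, while the reject-on-fail share stays below 1%.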

SPF-aware domains can publish loose or strict policies; the former are about twice as many as the latter. A noticeable number of maybe-aware domains publish invalid policies. In the following table, the evaluation was done using TXT RRTYPEs only, against an unused test address (203.0.113.99).

| spf_default | count | explanation |
|---|---|---|
| none | 11,515 | Domains that don't publish a TXT SPF record. |
| softfail or neutral | 6,476 | Loose policies, ending in either ~all (4,966) or ?all (1,510). |
| fail | 3,816 | Policies ending in -all, the strict ones. |
| permerror or temperror | 1,713 | All possible failures, either permanent (1,663) or temporary (50). |
| NULL | 1,240 | All and only those domains with SPF problems (spf_type=NULL, BAD). |
| pass | 93 | Policies ending in +all (or just all, since + is the default qualifier). |
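To make the mapping between the trailing `all` term and the result names concrete, here is a minimal sketch that reads the default result straight off a record's text. It is not the evaluation used for the table, which was a full SPF evaluation against the test address: this helper (the name `default_result` is made up) does no DNS lookups, ignores `include` and `redirect`, and cannot detect syntax errors.

```python
# SPF qualifiers and the result each one yields when its mechanism matches.
QUALIFIERS = {"+": "pass", "-": "fail", "~": "softfail", "?": "neutral"}


def default_result(record):
    """Return the result implied by the record's `all` term.

    Returns "none" if the text is not an SPF record at all, and None if
    the record has no `all` term, in which case only a real evaluation
    can tell the outcome.
    """
    terms = record.split()
    if not terms or terms[0].lower() != "v=spf1":
        return "none"
    for term in terms[1:]:
        term = term.lower()
        if term[0] in QUALIFIERS:
            qualifier, mechanism = term[0], term[1:]
        else:
            qualifier, mechanism = "+", term  # "+" is the default qualifier
        if mechanism == "all":
            return QUALIFIERS[qualifier]
    return None


for rec in ("v=spf1 mx -all", "v=spf1 a ~all", "v=spf1 ?all", "v=spf1 all"):
    print(rec, "->", default_result(rec))
```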

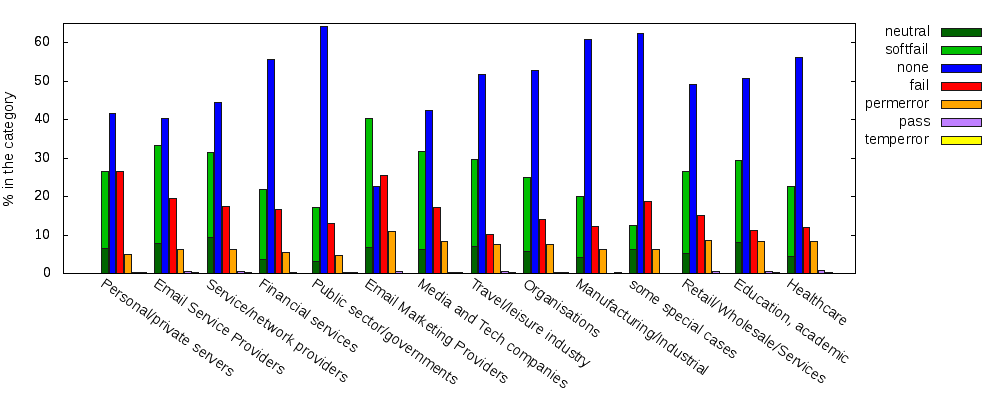

Those results can be broken down according to DNSWL's classification. DNSWL assigns a category and a score to each IP block it lists; thus one can derive a list of categories and a list of scores for each domain name. (I arbitrarily re-categorized[*] the 17 domains having two categories; none has more.) I discarded the problematic NULL results.

X-axis order: categories are arranged according to their ability to provide the receiving server with a meaningful SPF result. That is, the ratio of permerror/valid, increasing left to right, where valid is any of fail, softfail, neutral or pass.

The resulting plot shows two facts very clearly:

- Except for Email Marketing Providers (cat. 15), none dominates, followed by loose policies (neutral+softfail) except in some special cases (cat. 10).
- While pass and temperror are nearly invisible, policies afflicted by permanent errors are rather common.

I think readers can quickly work out how the user-admin relationship is characterized for each category, and thus explain why plain -all policies are more common in some categories than in others. For example, Personal/private servers (cat. 6) obviously can control their users more closely.

Fig. 1: Result of SPF evaluation by category

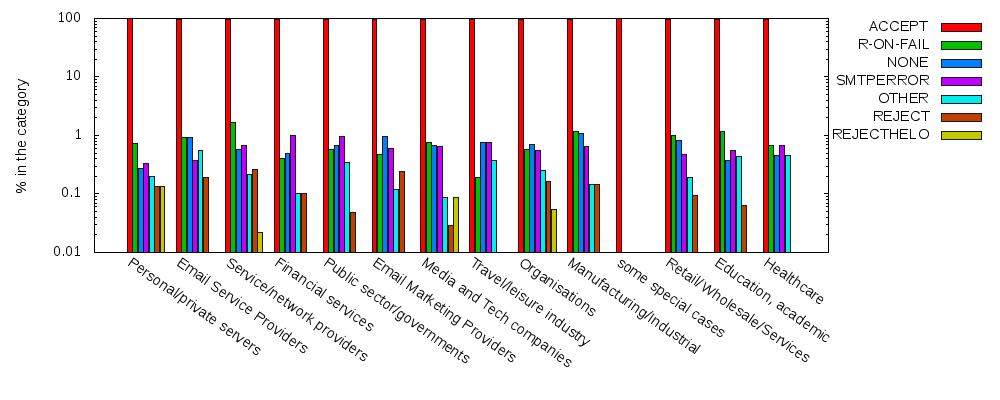

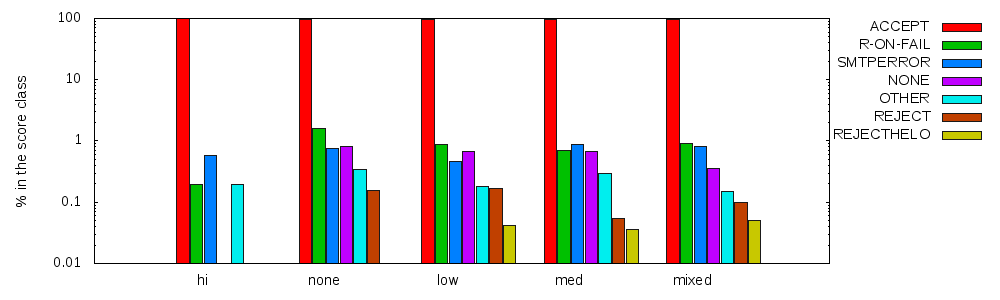

Now, for the corresponding behavior, the cases different from ACCEPT are so rare that we have to resort to a logarithmic scale just to be able to see them. Look at the numbers on the axis: the highest percentage of reject-on-fail, found at Service/Network providers (cat. 5), is just above 1% (1.66% to be more precise).

Fig. 2: Reject/accept behavior by category

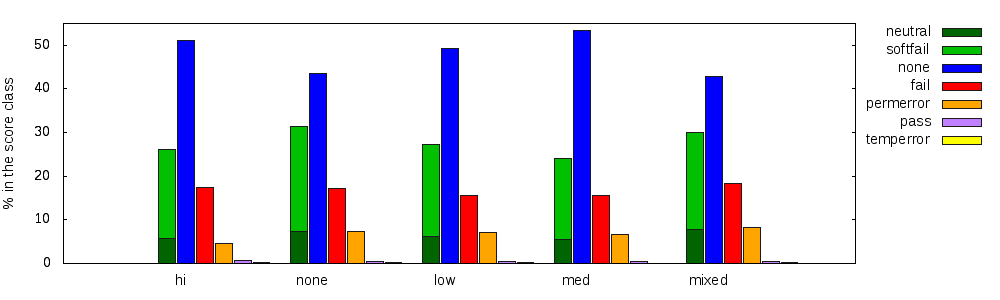

As with categories, the breakdown can also be done by score class. I put the domains with multiple scores in their own mixed class. Classes none, low, and med appear in an odd order, according to the SPF-oriented x-axis order used. Their permerror/valid ratios are quite similar to one another. They are, respectively:

- 275 permerror / (277 neutral + 919 softfail + 653 fail + 17 pass) = 0.15
- 835 permerror / (741 neutral + 2,470 softfail + 1,845 fail + 46 pass) = 0.16
- 365 permerror / (305 neutral + 1,020 softfail + 858 fail + 20 pass) = 0.17
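The x-axis ordering criterion is just this ratio, so the three values can be reproduced from the counts quoted above. The dictionary layout and the helper name `permerror_ratio` are illustrative choices, not taken from the survey's code.

```python
# The results that count as "valid" for the x-axis ordering.
VALID = ("neutral", "softfail", "fail", "pass")

# Per-class counts as quoted in the text, in the order none, low, med.
counts = {
    "none": {"permerror": 275, "neutral": 277, "softfail": 919, "fail": 653, "pass": 17},
    "low":  {"permerror": 835, "neutral": 741, "softfail": 2470, "fail": 1845, "pass": 46},
    "med":  {"permerror": 365, "neutral": 305, "softfail": 1020, "fail": 858, "pass": 20},
}


def permerror_ratio(c):
    """Ratio of permanent errors to valid SPF results for one class."""
    return c["permerror"] / sum(c[k] for k in VALID)


# Sorting by the ratio gives the left-to-right placement on the x-axis.
for name in sorted(counts, key=lambda n: permerror_ratio(counts[n])):
    print(f"{name}: {permerror_ratio(counts[name]):.2f}")
```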

Fig. 3: Result of SPF evaluation by score

Again, we also look at the fraction of those domains that deploy a behavior different from ACCEPT.

Fig. 4: Reject/accept behavior by score

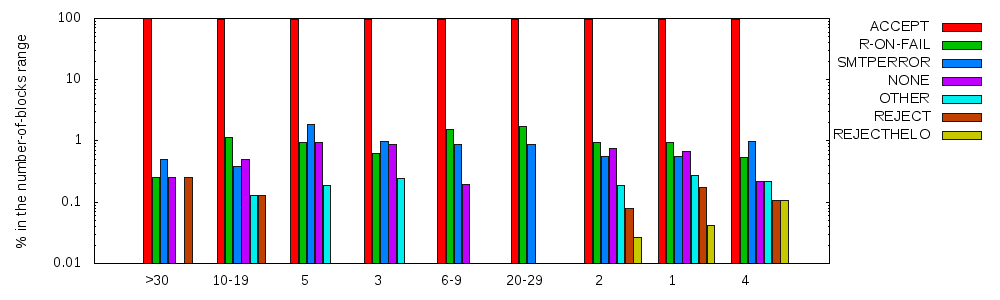

Finally, we can count how many blocks are listed for each domain, and use that number as an additional classification, presumably related to the domain's size. The SPF-oriented placement of small domains seems to be in contrast with the fact that Personal/private servers (cat. 6) leads the categories (Fig. 1). Indeed, the 1,561 domains in category 6 have an average of 1.2 blocks each, topped by one domain with seven blocks. However, only 9% of the single-block domains are in category 6.

Fig. 5: Result of SPF evaluation by no-of-blocks ranges

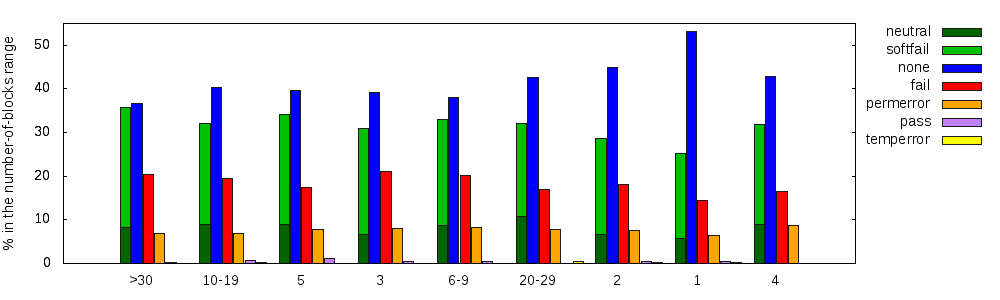

The class with 20-29 blocks features the top reject-on-fail percentage with 1.74%.

Fig. 6: Reject/accept behavior by no-of-blocks ranges